AI Lawyer Blog

Age Verification & Privacy Risk: Guide for In-House Counsel

Greg Mitchell | Legal consultant at AI Lawyer


If your company is planning an age-check flow, the real risk is not just letting minors into the wrong part of the product. It is building a system that tries to solve that problem by collecting far more personal data than the product decision actually needs.

That is the legal tension for in-house counsel. The question is not whether age verification sounds responsible. The question is whether the company can prove only what it needs to know, keep that data under tight control, and avoid creating a new privacy problem in the name of reducing another one.

Why age verification is becoming a legal priority

Age verification has moved from a niche compliance issue to a live regulatory one. The FTC’s Age Verification Workshop on January 28, 2026, and the FTC’s February 25, 2026 COPPA policy statement made that shift hard to ignore. The agency is not treating age assurance as a theoretical policy debate. It is treating it as a practical tool companies may need to use when children are on the platform.

The pressure is also coming from state law. Utah’s App Store Accountability Act requires app store providers to verify a user’s age category and adds parental-consent mechanics for minor accounts. Not every state has chosen the same model, but the direction is clear: lawmakers increasingly expect digital services to know enough about age to apply restrictions, safer defaults, or parental controls.

Federal baseline rules already matter here. Under COPPA, operators of child-directed services, and operators with actual knowledge that they are collecting personal information from children under 13, face specific obligations. That does not mean every company now needs the same age-verification stack. It does mean “we do not really know who is a child user” is getting harder to defend as a long-term position.

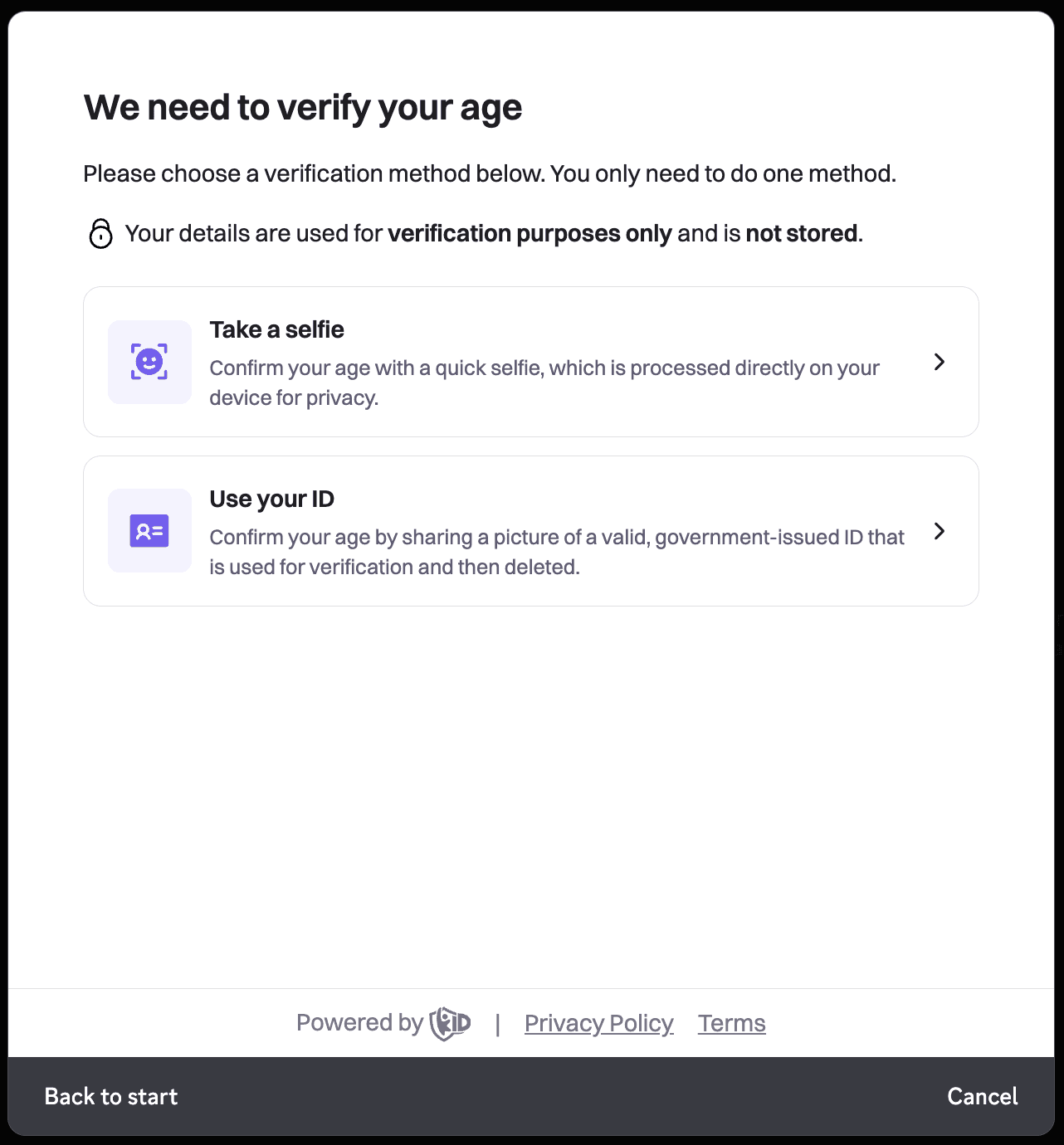

The real legal problem is not age checks — it is overcollection

Age verification sounds narrow. In practice, it often is not. A team starts with a simple question (is this user over 13, 16, or 18?) and ends up collecting an exact date of birth (DOB), ID scans, selfies, or persistent verification records. That is where the risk expands. If the product only needs a threshold answer, building an identity-grade intake flow is much harder to justify. The logic in NIST SP 800-63-4 is useful here: assurance should be calibrated to risk, not driven by a blanket preference for maximum certainty.

The same point shows up in the FTC’s February 25, 2026 COPPA policy statement. Even while encouraging age-verification tools, the FTC tied that position to familiar privacy controls: use the data for age assurance, limit disclosure, secure written commitments from vendors, and delete the information when it is no longer needed. That is not a green light for maximal collection. It is a reminder that child-safety goals do not erase data-minimization duties.

For legal teams, the practical question is blunt: what is the minimum data needed to make this product decision? If the answer is “an age band” or “pass/fail above a threshold,” exact DOB, raw ID images, and reusable biometric inputs may be more than the company can defend. Once that data enters the system, it tends to spread — into fraud, analytics, account recovery, or vendor workflows. A system that verifies age by collecting more data than the feature requires is not a safer system. It is a more legally exposed one.

Questions in-house counsel should ask before launch

Before launch, counsel should not start with the vendor demo. Start with scope. The first question is what problem the company is actually trying to solve. That is consistent with the risk-based approach in NIST’s Digital Identity Guidelines and with the privacy controls built into the FTC’s February 25, 2026 COPPA policy statement. If the harm is limited to one feature, the company may not need the same level of age assurance across the whole product.

A useful pressure test is this:

What specific harm are we trying to prevent?

Do we need exact age, or only whether the user is above or below a threshold?

What level of certainty do we actually need for this feature?

Does every user need the same verification flow, or only users trying to access higher-risk functionality?

What less invasive options did we reject, and why?

Then move to data mapping. The FTC’s policy statement focuses on limited use, controlled disclosure, reasonable security, and prompt deletion. That makes the next set of questions unavoidable:

What personal data is collected, generated, stored, or shared?

What does the company actually receive, and what stays with the vendor?

Are we collecting exact DOB, raw ID images, selfies, or biometric inputs when a narrower output would do?

How long is each data element retained?

Is the data used only for age assurance, or also for fraud, analytics, model training, or profiling?

The final set of questions is about governance. ICO age-assurance guidance is not U.S. law, but it is useful on design discipline: purpose limitation, access controls, restricted reuse, and review of error rates. Counsel should ask whether there is a path to challenge a bad result, whether sensitive source data is separated from persistent account data, and whether the team has documented necessity, proportionality, access limits, retention, and deletion. If the company cannot explain why this level of collection is necessary, it is not ready to launch.

What makes an age-verification program legally risky

The biggest red flag is mismatch. If the feature only requires a threshold decision, but the system collects exact DOB, government ID images, selfies, or reusable biometric inputs, the program is probably overbuilt. That cuts against the risk-based logic in NIST SP 800-63-4 and the guardrails in the FTC’s February 2026 COPPA policy statement, which ties enforcement discretion to narrow purpose, limited disclosure, reasonable security, and prompt deletion.

The next red flag is sprawl: the company cannot clearly say what the vendor sees, what the company receives back, how long raw evidence is retained, or whether the data will later be reused for fraud, analytics, account recovery, or model training. Once age-check data starts moving beyond the original decision, the program stops looking like a child-safety control and starts looking like a secondary-use problem. That is exactly why the ICO’s age-assurance guidance puts so much weight on purpose limitation and data protection by design.

A third red flag is blunt deployment. Running the same heavy flow for every user, every feature, and every risk level usually signals weak scoping, not strong governance. Combine that with missing retention limits, weak vendor oversight, lax internal access controls, and no path to challenge a bad result, and the legal case gets much harder to defend. A risky age-check program is not just intrusive. It is one that cannot explain why this level of collection is necessary in the first place.

What a lower-risk age-verification model looks like

A lower-risk model starts with a narrower output. If the product only needs to know whether a user is above or below 13, 16, or 18, the system should be built to return that threshold answer — not a full identity package. That matches the risk-based logic in NIST’s Digital Identity Guidelines and the FTC’s own framing in its February 2026 COPPA policy statement: use age-assurance tools, but limit use, sharing, retention, and vendor access to what is actually necessary.
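To make the threshold idea concrete for product and engineering counterparts, here is a minimal Python sketch, assuming the date of birth is evaluated inside the verification step and discarded afterward. The names `ThresholdResult`, `age_on`, and `to_threshold_result` are hypothetical, and the supported thresholds simply mirror the 13/16/18 examples above. It illustrates the design principle; it is not a vendor API or a compliance standard.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ThresholdResult:
    """The only thing the product keeps: which threshold was checked and the answer."""
    threshold: int
    meets_threshold: bool


def age_on(birth_date: date, today: date) -> int:
    """Age in whole years on `today`.

    Subtracts one if this year's birthday has not happened yet. Under this
    convention, a Feb 29 birthday is treated as reached on Mar 1 in non-leap years.
    """
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def to_threshold_result(birth_date: date, threshold: int, today: date) -> ThresholdResult:
    """Reduce a date of birth to a pass/fail answer for one threshold.

    Hypothetical design: this runs inside the verification step (for example,
    on the vendor side), and the caller stores only the returned
    ThresholdResult, never birth_date.
    """
    if threshold not in (13, 16, 18):
        raise ValueError(f"unsupported threshold: {threshold}")
    return ThresholdResult(threshold, age_on(birth_date, today) >= threshold)
```

For example, a user born on June 15, 2010 and checked on March 1, 2026 would yield `ThresholdResult(threshold=13, meets_threshold=True)`. What reaches the account record is that pair, not the birth date.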

The next piece is segmentation. Not every feature needs the same level of age assurance. A product may justify a lighter flow for general access and a stronger one only for higher-risk functions, such as access to adult content, direct messaging with unknown adults, or purchases. That is usually easier to defend than one heavy verification flow applied to every user, every time. The ICO’s age-assurance guidance is useful here because it treats proportionality as a design question, not just a policy slogan.
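Segmentation can be written down as an explicit policy table that product and legal review together. The sketch below assumes three hypothetical assurance tiers and uses illustrative feature names drawn from the examples above. The tiers, labels, and mapping are assumptions for illustration, not a regulatory taxonomy.

```python
from enum import IntEnum
from typing import Optional


class Assurance(IntEnum):
    """Hypothetical assurance tiers, ordered from lightest to strongest."""
    SELF_DECLARED = 1  # user states an age band
    ESTIMATED = 2      # e.g. an age-estimation signal
    VERIFIED = 3       # e.g. a document-backed check run by a vendor


# Hypothetical feature-to-tier policy. Every feature must be listed explicitly,
# so an unscoped feature fails loudly instead of inheriting a default flow.
FEATURE_POLICY = {
    "general_access": Assurance.SELF_DECLARED,
    "purchases": Assurance.ESTIMATED,
    "dm_unknown_adults": Assurance.ESTIMATED,
    "adult_content": Assurance.VERIFIED,
}


def required_step_up(feature: str, current: Assurance) -> Optional[Assurance]:
    """Return the tier the user must step up to, or None if `current` suffices."""
    if feature not in FEATURE_POLICY:
        raise KeyError(f"feature {feature!r} has no age-assurance scoping decision")
    required = FEATURE_POLICY[feature]
    return required if current < required else None
```

Under this hypothetical table, a user with only a self-declared age band can use general features without friction but would be asked to step up before reaching adult content. Raising an error for unmapped features is a deliberate choice: it forces a scoping decision for each new feature rather than pushing every user into the heaviest flow.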

A safer model also keeps source data on a short leash. If a vendor must process an ID image or selfie, the company should ask whether it can receive only a pass/fail or age-band result, not the raw evidence. Retention should be short. Reuse should be blocked. Internal access should be narrow. And the program should include a way to challenge bad results, because false positives and false negatives are not edge cases in age assurance — they are part of the operating reality. A lower-risk program is not the one with the most data. It is the one that proves only what the product needs and then closes the door on everything else.
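The short-leash controls can also be expressed as code-level guardrails. This is a minimal sketch, assuming the company or its vendor holds raw evidence only temporarily. The seven-day window, the purpose labels, and the names `RawEvidence`, `check_purpose`, and `purge_expired` are hypothetical placeholders, not recommended legal values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical: the only purposes age-check data may serve. Anything else is refused.
ALLOWED_PURPOSES = frozenset({"age_assurance", "result_challenge"})

# Hypothetical retention window for raw evidence (ID image, selfie).
# Illustrative only; the real value is a legal and product decision.
RAW_EVIDENCE_RETENTION = timedelta(days=7)


@dataclass(frozen=True)
class RawEvidence:
    evidence_id: str
    collected_at: datetime


def check_purpose(purpose: str) -> None:
    """Block reuse of age-check data for fraud, analytics, model training, etc."""
    if purpose not in ALLOWED_PURPOSES:
        raise PermissionError(f"age-check data may not be used for {purpose!r}")


def purge_expired(
    items: list[RawEvidence], now: datetime
) -> tuple[list[RawEvidence], list[str]]:
    """Split evidence into items still within the window and IDs due for deletion."""
    keep: list[RawEvidence] = []
    delete: list[str] = []
    for item in items:
        if now - item.collected_at >= RAW_EVIDENCE_RETENTION:
            delete.append(item.evidence_id)
        else:
            keep.append(item)
    return keep, delete
```

Including a `result_challenge` purpose is one way to preserve the path to contest a bad result while still refusing reuse for fraud, analytics, or model training. How long evidence is genuinely needed to resolve a challenge is a question for counsel, not a constant to be set once in code.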

Why legal, privacy, product, and compliance need a shared model

A sound age-verification program is not a legal-only project. Legal may define the risk tolerance, but product decides where the check appears, privacy decides what data should exist at all, security decides how it is protected, procurement decides what the vendor can see, and trust and safety decides what happens after a user is classified. That kind of coordination is exactly what the NIST Privacy Framework is built to support: privacy risk management that connects business objectives, organizational roles, and implementation choices rather than leaving each team to optimize in isolation.

That matters even more in age assurance because the failure modes sit in different functions. The FTC’s February 2026 COPPA policy statement on age verification ties enforcement discretion to purpose limitation, vendor controls, prompt deletion, notice, security, and reasonable accuracy. None of those can be delivered by one team alone. If product ships a blunt flow, procurement signs a loose vendor contract, or security treats raw verification data like ordinary account metadata, the legal design falls apart in practice.

The same point runs through the FTC’s 2025 COPPA rule updates. The rule now emphasizes data minimization and limits on retention, while the final rule text also expands “personal information” to include government-issued identifiers and biometric identifiers used for automated or semi-automated recognition. That is why age verification cannot be treated as a narrow front-end feature. It is a shared operating model. If the teams do not align before launch, the system will collect too much, keep it too long, or expose it to too many hands.

Conclusion

Age verification is not a legal win by itself. It becomes defensible only when the company can show that the check is proportionate to the actual product risk, that the output is narrower than full identity proofing, and that the data is tightly controlled from collection through deletion. That is the through line in both the FTC’s February 2026 policy statement on age-verification technology and NIST’s Digital Identity Guidelines.

For in-house counsel, the practical answer is not “verify age everywhere.” It is “match the method to the risk, collect only what the decision requires, and make sure the program cannot drift into a broader identity, analytics, or vendor-retention system.” That approach also fits the direction of the FTC’s 2025 COPPA rule updates, which reinforced limits on how children’s data is collected, used, and retained.
